Fractal Fract, Free Full-Text

By a mysterious writer

Last updated 19 September 2024

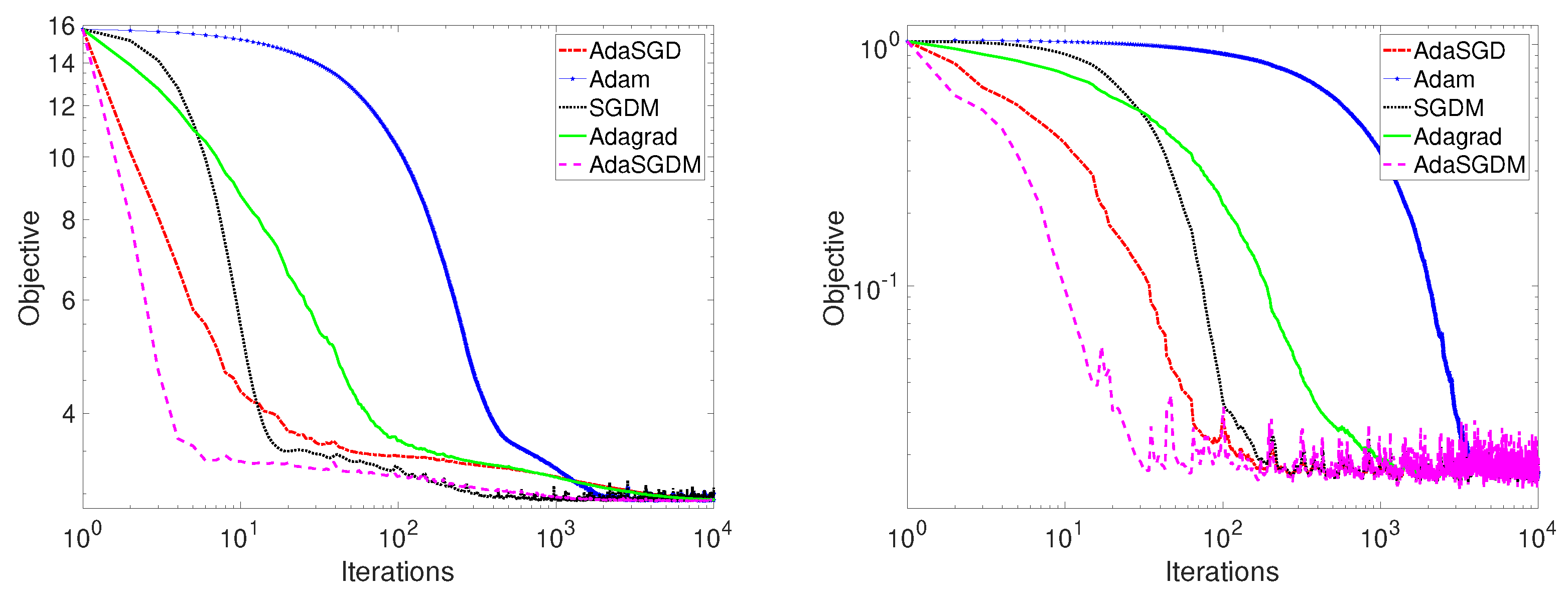

Stochastic gradient descent is the method of choice for solving large-scale optimization problems in machine learning. However, choosing the step sizes effectively is challenging, and the choice can greatly influence the performance of stochastic gradient descent algorithms. In this paper, we propose a class of faster adaptive gradient descent methods, named AdaSGD, for solving both convex and non-convex optimization problems. The novelty of this method is a new adaptive step size that depends on the expectation of the past stochastic gradient and its second moment, which makes the method efficient and scalable for big data and high parameter dimensions. We show theoretically that the proposed AdaSGD algorithm has a convergence rate of O(1/T) in both convex and non-convex settings, where T is the maximum number of iterations. In addition, we extend AdaSGD to the case of momentum and obtain the same convergence rate for AdaSGD with momentum. Finally, we report several numerical experiments on problems arising in machine learning that illustrate the theoretical results and the promise of the proposed method.
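The abstract does not state the AdaSGD update rule, so the following is only a minimal sketch of the general idea of a step size driven by statistics of past stochastic gradients. It assumes an AdaGrad-Norm style rule (one scalar step size, divided by the square root of the accumulated squared gradients) as a stand-in. The function names `adaptive_sgd` and `make_data`, the parameters `base_lr` and `eps`, and the noise-free toy regression problem are all hypothetical choices made for illustration, not taken from the paper.

```python
import math
import random


def make_data(n=200, true_w=3.0, seed=1):
    """Build a noise-free toy regression set y = true_w * x (illustrative only)."""
    rng = random.Random(seed)
    xs = [rng.uniform(0.5, 1.5) for _ in range(n)]
    ys = [true_w * x for x in xs]
    return xs, ys


def adaptive_sgd(xs, ys, steps=20000, base_lr=0.5, eps=1e-12, seed=0):
    """Fit y ~ w * x by SGD with an AdaGrad-Norm style scalar step size.

    Stand-in rule, NOT the AdaSGD update from the paper: the step size is
    base_lr / sqrt(sum of squared past stochastic gradients).
    """
    rng = random.Random(seed)
    w = 0.0            # scalar parameter, started at zero
    grad_sq_sum = 0.0  # running sum of squared stochastic gradients
    for _ in range(steps):
        i = rng.randrange(len(xs))       # sample one data point
        g = (w * xs[i] - ys[i]) * xs[i]  # gradient of 0.5 * (w * x - y) ** 2
        grad_sq_sum += g * g             # update the second-moment statistic
        step = base_lr / math.sqrt(grad_sq_sum + eps)
        w -= step * g                    # SGD update with the adaptive step
    return w


if __name__ == "__main__":
    xs, ys = make_data()
    print(adaptive_sgd(xs, ys))
```

Because the step size shrinks automatically while gradients are large and stabilizes once they become small, no hand-tuned decay schedule is needed in this toy setting. The sketch uses only the second-moment statistic; a rule that also uses the running mean of past gradients, as the abstract describes, would need the update formula from the paper itself.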

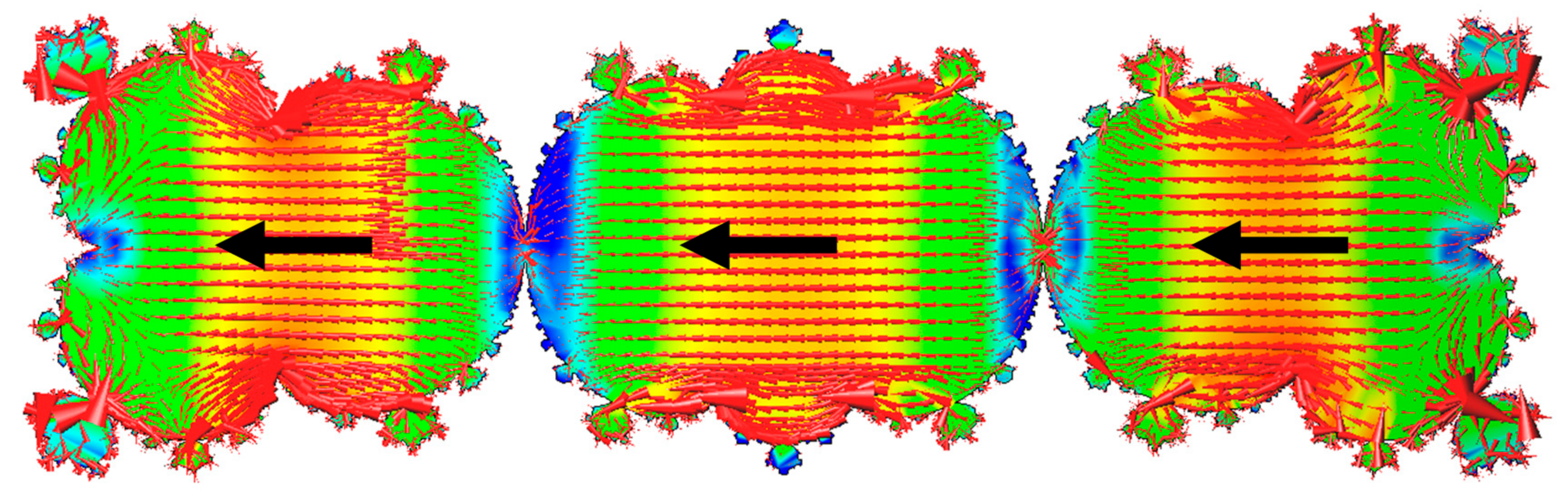

Thermodynamics and Characteristics of Heterogeneous Nucleation on Fractal Surfaces

Looking For Group - Guild Wars 2 Wiki (GW2W)

Fractal Filters: Glass filters for prism photography and video.

:max_bytes(150000):strip_icc()/dotdash_INV_final_Fractal_Indicator_Definition_and_Applications_Jan_2021-01-38e98076e9264b0c86b2051bdb76df27.jpg)

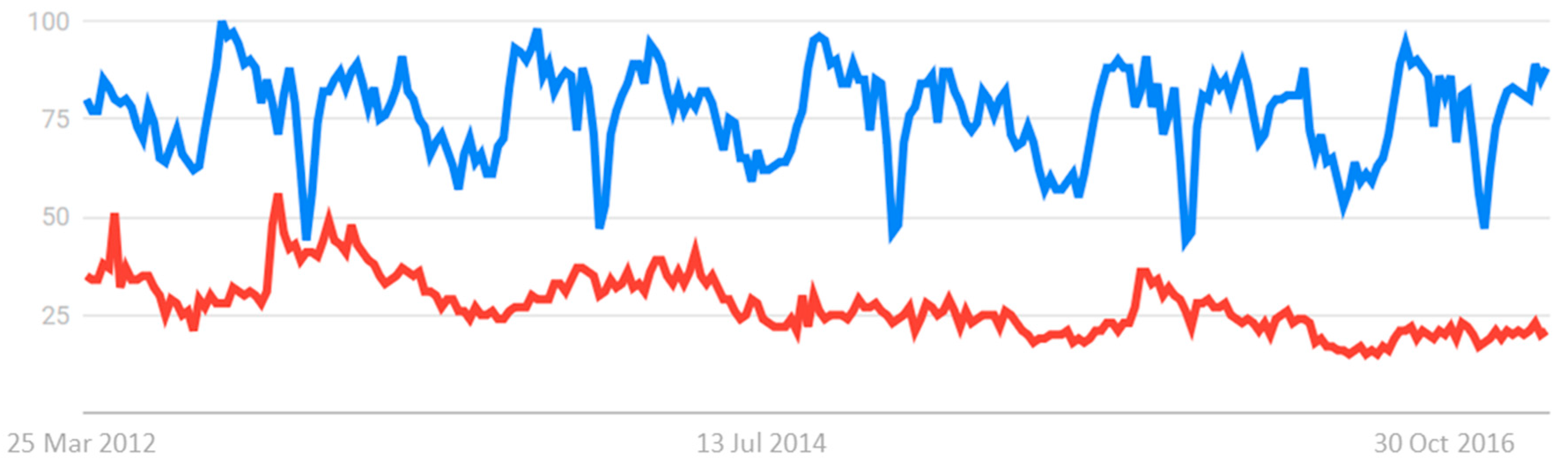

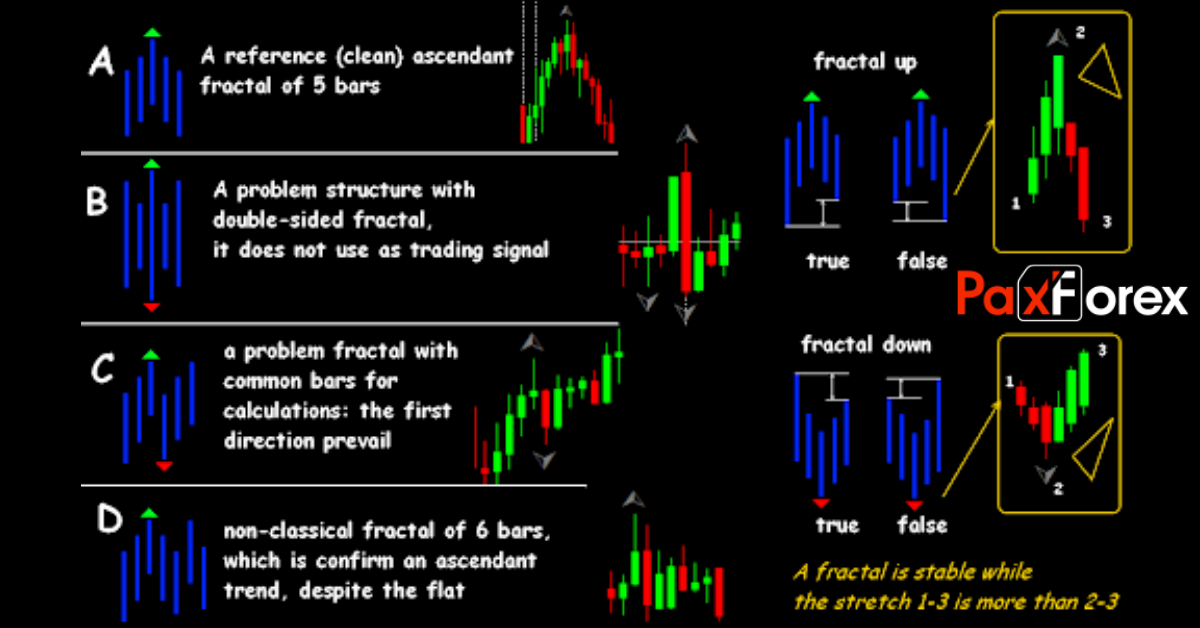

Fractal Indicator: Definition, What It Signals, and How To Trade

Fractal Chaos Bands Indicator Formula, Strategy - StockManiacs

Fractal Fract, Free Full-Text

Fractal Fract, Free Full-Text

Fractals and Brains (Part 1)

The Fractal Geometry of Nature: Benoit B. Mandelbrot: 9780716711865: : Books

What are Fractals in Forex Trading

Recomendado para você

-

Steepest Descent Method - an overview19 setembro 2024

Steepest Descent Method - an overview19 setembro 2024 -

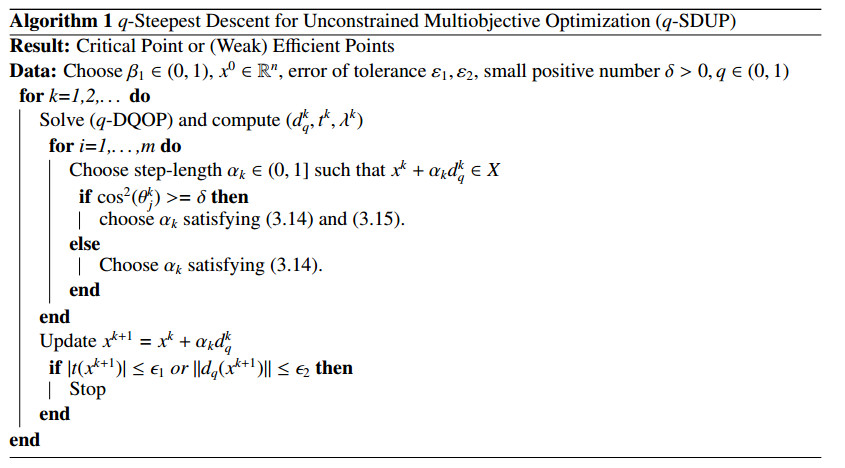

On q-steepest descent method for unconstrained multiobjective optimization problems19 setembro 2024

On q-steepest descent method for unconstrained multiobjective optimization problems19 setembro 2024 -

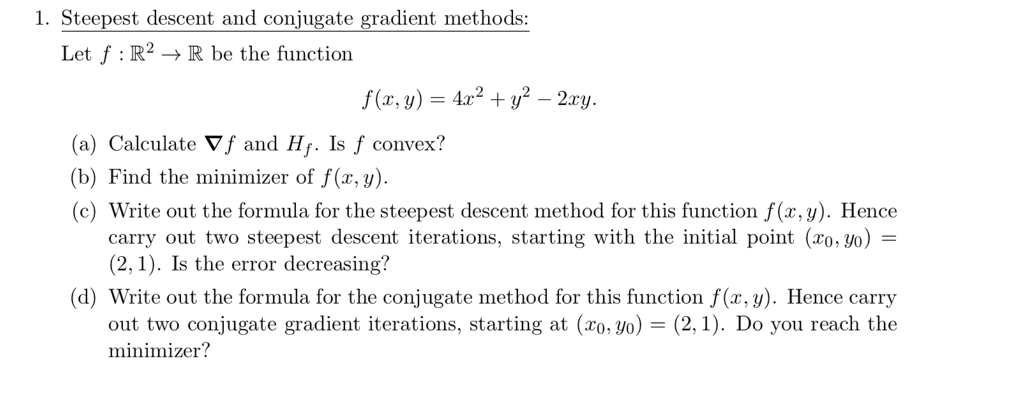

Solved 1. Steepest descent and conjugate gradient methods19 setembro 2024

Solved 1. Steepest descent and conjugate gradient methods19 setembro 2024 -

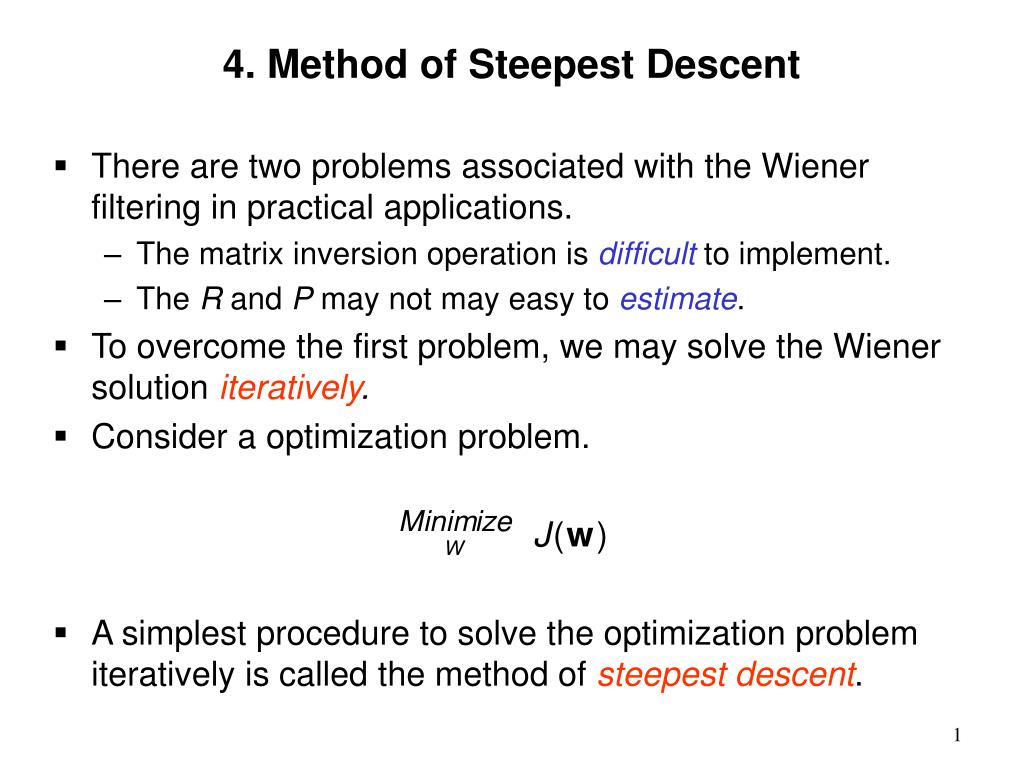

PPT - 4. Method of Steepest Descent PowerPoint Presentation, free download - ID:565484519 setembro 2024

PPT - 4. Method of Steepest Descent PowerPoint Presentation, free download - ID:565484519 setembro 2024 -

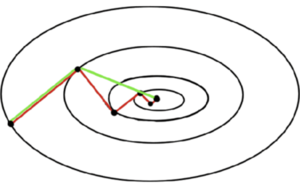

Guide to gradient descent algorithms19 setembro 2024

Guide to gradient descent algorithms19 setembro 2024 -

Conjugate gradient methods - Cornell University Computational Optimization Open Textbook - Optimization Wiki19 setembro 2024

Conjugate gradient methods - Cornell University Computational Optimization Open Textbook - Optimization Wiki19 setembro 2024 -

Chapter 4 Line Search Descent Methods Introduction to Mathematical Optimization19 setembro 2024

Chapter 4 Line Search Descent Methods Introduction to Mathematical Optimization19 setembro 2024 -

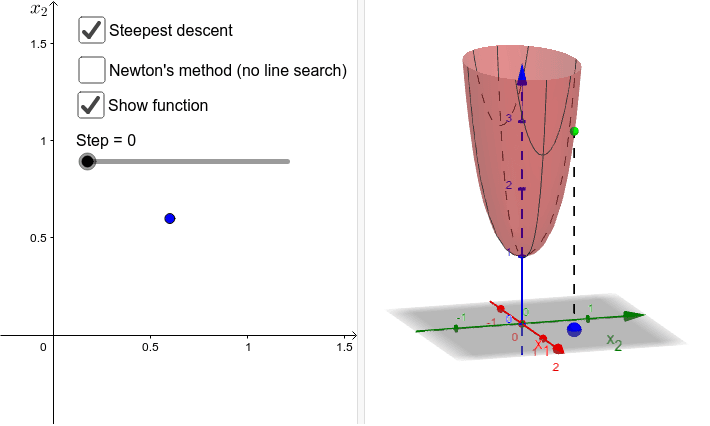

Steepest descent vs gradient method – GeoGebra19 setembro 2024

Steepest descent vs gradient method – GeoGebra19 setembro 2024 -

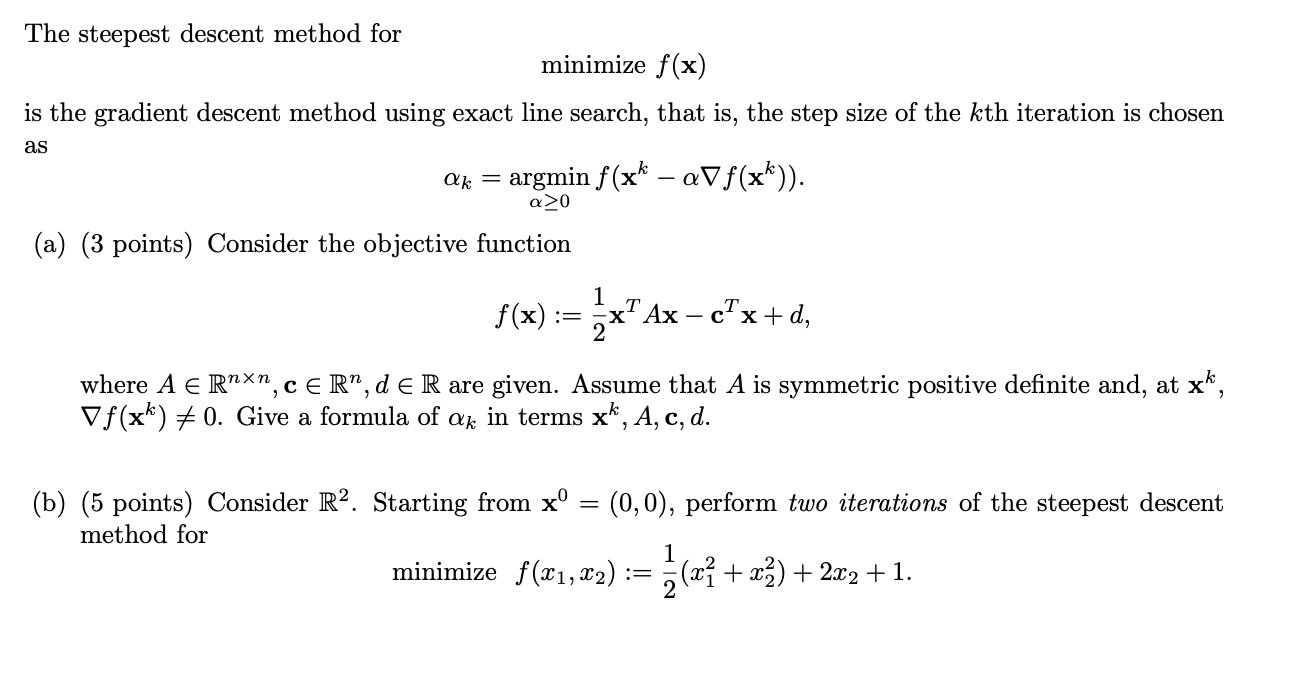

Solved The steepest descent method for minimize f(x) is the19 setembro 2024

Solved The steepest descent method for minimize f(x) is the19 setembro 2024 -

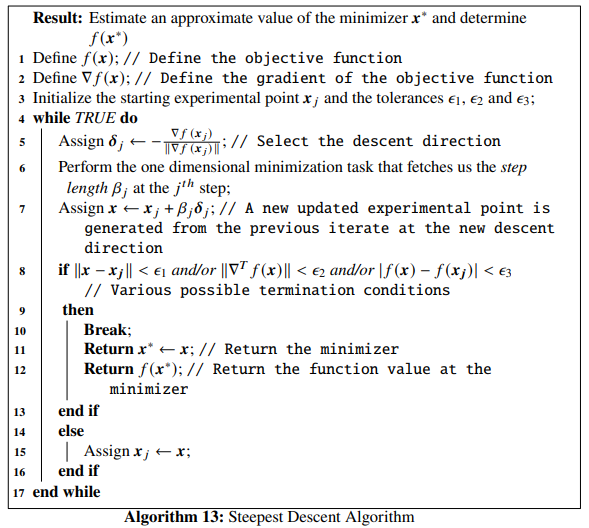

The Steepest Descent Algorithm. With an implementation in Rust., by applied.math.coding19 setembro 2024

The Steepest Descent Algorithm. With an implementation in Rust., by applied.math.coding19 setembro 2024
